
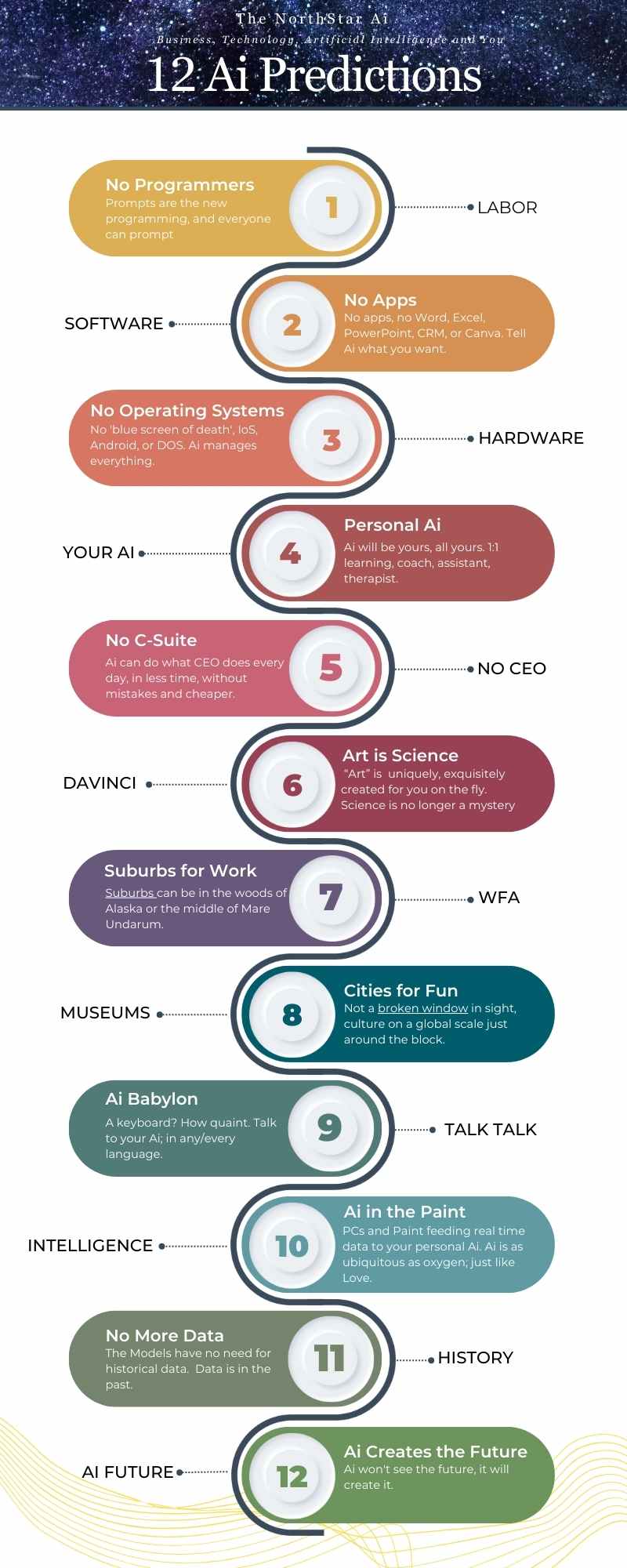

Data will be proven Redundant & Historical

By Greg Walters

This concept is illustrated in the RAG model, where data even a second old is considered obsolete. What's more, the quest for data will be satiated as sensors digest new models and information in real time: we are moving from 'retrieval' computing to 'generative' computing, on an individual basis, human to human. My notion is a world without data, at least in the contemporary sense. Yes, AI will create 'synthetic' data and train itself, but my contention is that as generative AI connects to the real world through sensors, developing all six of the human senses, data is no longer historic. The move from retrieval to generative computing takes center stage as inputs and outputs become continuous and real-time, avoiding the 'echo chamber'.

The impact is stupendous. AI, through personal LLMs, will feel more human than human, offering advice on everything from predicting the weather and cooking dinner to personal, on-demand therapy.

GPT:

"Recent discussions have raised concerns about the increasing demand for high-quality data, which is crucial for powering advanced artificial intelligence (AI) conversational tools like OpenAI’s ChatGPT. As AI continues to evolve, the appetite for such data grows, posing the question: will this demand soon outstrip supply, potentially stalling AI progress?

Analysis

Despite these concerns, a paradigm shift in how AI utilizes data is unfolding. Traditionally, AI systems relied heavily on vast historical data sets to learn and make predictions. However, the rapid advancement in AI technologies has begun to change this dependency. As AI models become more sophisticated, the necessity for perpetual data accumulation diminishes. Instead, these models leverage existing information more efficiently and adapt dynamically to new data."
